We can type a sentence, wait a beat, and watch a micro-film materialize on-screen. Text-to-video magic has left the demo room and entered the budget meeting: 51 percent of video marketers already use AI tools to create or edit footage, according to Wyzowl’s 2025 State of Video Marketing report.

In this guide, we’ll share five practical workflows—plus the newest tools, from OpenAI Sora to AI art generation platforms like Leonardo.ai—so you can generate studio-quality clips without booking a studio.

Text-to-video breakthroughs and tools

Big players move fast

OpenAI Sora

- February 2024: beta rollout to safety testers.

- December 9, 2024: wide release for ChatGPT Plus and Pro subscribers; clips run up to 20 seconds at 1080p on the top tier, with plans starting at 20 dollars per month.

- September 30, 2025: Sora 2 adds in-scene audio and extends runtimes “well past one minute,” plus a controversial opt-out policy for copyright holders.

Google Veo 2

- Announced December 16, 2024. Early-access users can generate clips up to 2 minutes with improved character consistency, though pricing remains undisclosed.

Runway Gen-3 Alpha and others

Start-ups aren’t sitting still. Runway shipped Gen-3 Alpha to paying users in mid-2024, while Microsoft, Meta, and Adobe each launched public betas within the past 12 months.

For creators, the upside is speed: major models now add headline features such as longer runtimes, audio tracks, or identity controls in under 90 days on average, while entry-level plans still start at about 20 dollars a month. Text commands are replacing camera crews faster than most budgets can adapt.

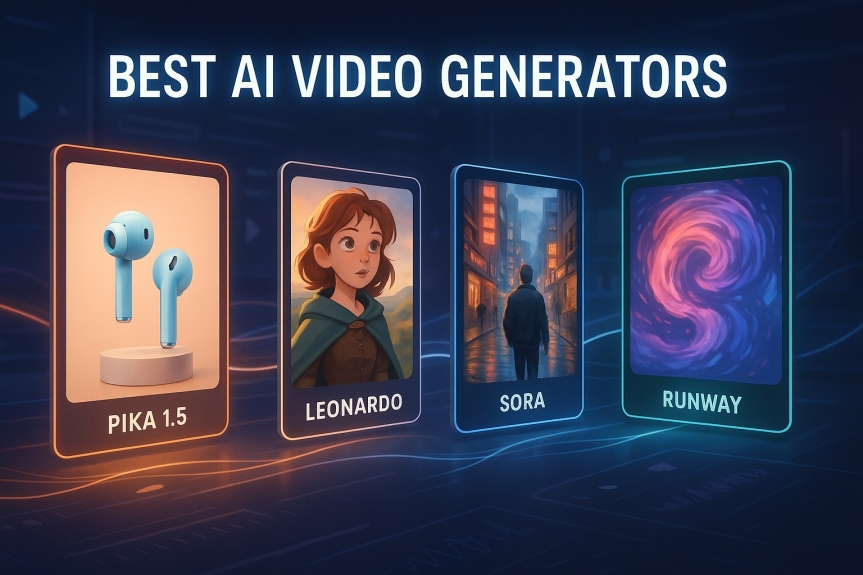

Best AI Video Generators

1. Leonardo.ai — Top pick for art-driven keyframes → motion

- Why pick it: Pairs strong image generation with motion workflows (great for style-consistent keyframes you can animate).

- Best for: Concept art, stylish teaser shots, and “paint-then-move” pipelines (e.g., keyframe in Leonardo → animate in a video model).

2. Runway Gen-3 Alpha — Fast scene shots for trailers

- Why pick it: Rapid 5–10s clips you can sequence into trailer-style edits; strong for quick ideation and B-roll.

- Best for: Multi-shot storyboards and reels (generate several short clips → edit in CapCut/Premiere).

3. Pika 1.5 — Give stills a pulse

- Why pick it: Image-to-video motion prompts (pan, zoom, subtle movement) to animate illustrations and product stills.

- Best for: Looping hero banners, animated social posts, and breathing life into static assets.

4. OpenAI Sora — Longer, cinematic text-to-video

- Why pick it: General-purpose text-to-video with longer runtimes and in-scene audio on newer versions.

- Best for: Single-prompt cinematic scenes and mood pieces to anchor a sequence.

How AI video generators work (in brief)

From prompt to moving pixels

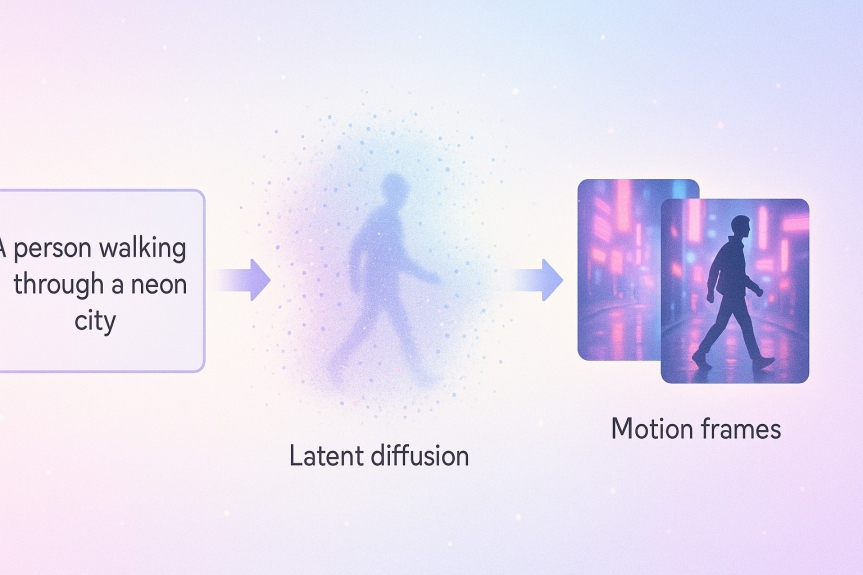

Think of a text-to-video model as a two-step assembly line:

- Language encoding. Your prompt (e.g., “A lone explorer walks through a neon-lit city in the rain”) is converted into vectors that describe objects, lighting, and camera angle.

- Frame synthesis. A latent diffusion network starts with pure noise, then iteratively denoises the canvas until the first frame appears, repeats the process for each subsequent frame, and checks temporal coherence so objects stay in place.
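The denoising idea can be illustrated with a toy sketch. This is not how any commercial model is implemented: a real network learns to predict the noise to remove, whereas here the target is known up front, and `prompt_vector`, `denoise_clip`, and `max_frame_jump` are hypothetical names made up for illustration. It only shows the shape of the loop: start from noise, refine in small steps, then check that neighbouring frames agree.

```python
import random

def denoise_clip(target, num_frames=4, steps=20, strength=0.3, seed=0):
    """Toy stand-in for frame synthesis: start from noise, step toward a target."""
    rng = random.Random(seed)
    # Each frame starts as pure Gaussian noise, one value per "pixel".
    frames = [[rng.gauss(0.0, 1.0) for _ in target] for _ in range(num_frames)]
    for _ in range(steps):
        for frame in frames:
            for i, goal in enumerate(target):
                # Remove a fixed fraction of the remaining error on each step.
                frame[i] += strength * (goal - frame[i])
    return frames

def max_frame_jump(frames):
    """Largest per-pixel change between neighbouring frames (lower is steadier)."""
    return max(
        abs(a - b)
        for prev, nxt in zip(frames, frames[1:])
        for a, b in zip(prev, nxt)
    )

# Hypothetical 3-value "prompt embedding" used as the denoising target.
prompt_vector = [0.8, 0.1, 0.5]
clip = denoise_clip(prompt_vector)
print("largest jump between frames:", round(max_frame_jump(clip), 4))
```

Because every frame is pulled toward the same target, the jump between frames shrinks as the number of steps grows, which is a rough analogue of the temporal-coherence check described above.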

Most commercial models today output 5- to 60-second clips at 25–30 frames per second. According to Techopedia, OpenAI Sora tops out at 60 seconds, while Runway Gen-3 Alpha caps clips at 10 seconds. Because every frame comes from the same vector recipe, swapping a single adjective (for example, “neon” instead of “moonlit”) reshapes color, mood, and even camera movement on the next render.

No cameras, no crew; just math turning tokens into motion.

Example 1 – spin a cinematic scene from a single prompt

Imagine opening Runway Gen-3 Alpha, typing “A lone explorer treks through a neon city at midnight, rain glistening on chrome streets, film-noir style,” and clicking Generate. About 45 seconds later, a 5-second, 720p clip plays back: wide angle, blue halos, a trench-coat silhouette pushing through sheets of rain, as reported by TechCrunch. No cameras, just a prompt that doubles as your storyboard.

Creators stretch this further each day. One filmmaker tried “alien creatures roaming a lush forest at dawn, surreal lighting, 4K.” The model responded with a cinematic fly-over featuring iridescent plants, soft god-rays, and an otherworldly beast drifting through pollen, according to TechCrunch and Techopedia.

You can test the workflow in minutes:

- Write a vivid sentence packed with nouns and active verbs.

- Add cinematic cues: camera angle, color palette, time of day.

- Generate, review, and tweak a word or two if the vibe feels off; that variability is a feature, not a bug.
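The three steps above can be sketched as a small prompt helper. The function names `build_prompt` and `swap_word` are hypothetical, and the output is just a string to paste into whichever generator you use; no vendor API is assumed.

```python
def build_prompt(subject_and_action, cues):
    """Join the core sentence with comma-separated cinematic cues."""
    parts = [subject_and_action.strip()] + [c.strip() for c in cues if c.strip()]
    return ", ".join(parts)

def swap_word(prompt, old, new):
    """Return a variant with one word changed, for a quick comparison render."""
    return prompt.replace(old, new, 1)

prompt = build_prompt(
    "A lone explorer treks through a neon city",
    ["low wide angle", "blue and magenta palette", "midnight rain"],
)
variant = swap_word(prompt, "neon", "moonlit")
print(prompt)
print(variant)
```

Keeping the cues in a list makes it easy to change one element at a time, so you can tell which word caused a shift in the render.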

Why it matters: rapid ideation. Storyboarders preview scenes before pitching, indie game teams test ambience without concept artists, and marketers grab atmospheric B-roll for campaigns—all for roughly five credits per second on Runway’s standard plan.
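To budget a session, the arithmetic is simple enough to script. The sketch below takes the rough five-credits-per-second figure quoted above as its default; the real rate depends on your plan and model, and `estimate_credits` and `rerolls_per_clip` are illustrative names, not part of any Runway tooling.

```python
import math

# Rough figure quoted in this article; treat it as an assumption and
# replace it with the rate shown on your own plan.
CREDITS_PER_SECOND = 5

def estimate_credits(clip_seconds, rerolls_per_clip=0, rate=CREDITS_PER_SECOND):
    """Estimate credits for a list of clip lengths, including re-renders."""
    total_seconds = sum(clip_seconds) * (1 + rerolls_per_clip)
    return math.ceil(total_seconds * rate)

storyboard = [5, 5, 10]  # clip lengths in seconds
print("first pass:", estimate_credits(storyboard))
print("with two rerolls each:", estimate_credits(storyboard, rerolls_per_clip=2))
```

Rerolls multiply the bill quickly, which is a good reason to tweak one word at a time rather than regenerating blindly.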

Example 2 – give static images a pulse

A single photo can now move. Upload an illustration to Pika 1.5 or Runway Gen-4 Video (Image + Text mode), add a motion prompt such as “slow push-in, trees swaying gently,” and the model predicts how each pixel should drift. Typical output: a 4-second, 720p clip generated in about 15 seconds for 15 credits on Pika’s free tier, according to VentureBeat.

Creators stretch this tactic to extend their own art. One artist painted a hummingbird in Midjourney, then asked Pika to flap its wings; the resulting video preserved every brushstroke but added lifelike motion, VentureBeat reports. The same workflow refreshes internet icons: upload the “Distracted Boyfriend” meme, prompt a subtle head turn, and the couple suddenly acts out the joke.

Keep moves modest: pan, zoom, and breathe. Large limb swings can introduce artifacts, so iterate—start small, review, and layer complexity.

For marketers, that means looping hero banners or animated product stills without a shoot; for storytellers, atmospheric establishing shots crafted in minutes, not days.

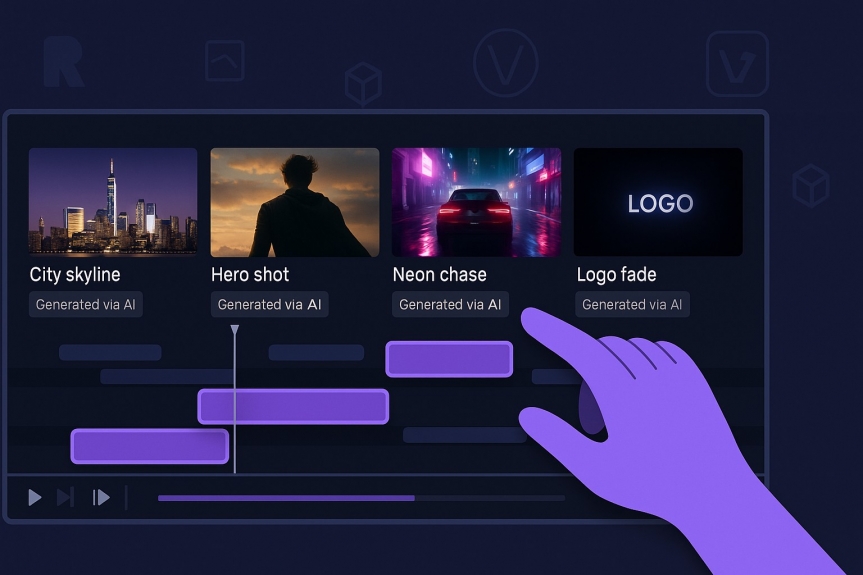

Example 3 – stitch multiple AI clips into a trailer-style sequence

One prompt makes a scene; several prompts make a story. With today’s generators you can draft a 30-second trailer in a single work session.

- Outline the beats. Sketch four to six story beats, for example: skyline opener → hero close-up → neon-alley chase → logo burn-in.

- Generate each shot.

  - Runway Gen-3 Alpha: up to 10-second, 720p clips; average render about 60 seconds.

  - Google Veo 2: early-access users can create clips up to 2 minutes with a character-consistency toggle.

  - OpenAI Sora (Plus tier): 20-second, 480–720p limits; 50 videos per month for 20 dollars.

- Edit. Drop the clips into CapCut or Premiere, trim to 2- to 5-second shots, add motion-matched swooshes, then layer an AI-generated synth bed from Soundraw or Suno.

Proof of concept: designer Max Escu’s sci-fi teaser The Outworld combined Leonardo.ai keyframes, Runway Gen-3 motion, and Suno music, pulling more than 200,000 reposts on X in 48 hours.

Pacing tips: five-second takes feel cinematic, while two-second flashes build energy. End with a one-second title card that lingers long enough for recall, then hit render and share.
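Before opening the editor, it can help to lay the shots out on a timeline and confirm they fit the 30-second target. The sketch below is one way to do that; the shot list and the `build_timeline` helper are made-up examples, with durations chosen to follow the pacing tips above.

```python
def build_timeline(shots):
    """Return (start, end, label) tuples for shots given as (label, seconds)."""
    timeline, cursor = [], 0.0
    for label, seconds in shots:
        timeline.append((cursor, cursor + seconds, label))
        cursor += seconds
    return timeline

# Hypothetical shot list: five-second takes for mood, two-second flashes
# for the chase, and a one-second title card at the end.
shots = [
    ("skyline opener", 5.0),
    ("hero close-up", 5.0),
    ("neon-alley chase A", 2.0),
    ("neon-alley chase B", 2.0),
    ("neon-alley chase C", 2.0),
    ("logo burn-in", 5.0),
    ("title card", 1.0),
]

timeline = build_timeline(shots)
total = timeline[-1][1]
for start, end, label in timeline:
    print(f"{start:5.1f}s to {end:5.1f}s  {label}")
print("fits 30-second target:", total <= 30.0)
```

The start and end times double as a cut list you can follow when trimming clips in CapCut or Premiere.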

Example 4 – put an AI presenter on camera with no filming

Need a talking head but not the studio? Type a script, pick an avatar, and the platform renders a presenter who speaks in your chosen language.

Synthesia Enterprise

- Library: 230+ stock avatars and 140+ languages/voices (as of March 25, 2025).

- Pricing: Starter plan 29 dollars per month for up to 10 minutes of video.

- Users: More than 50,000 teams rely on Synthesia for training and comms, including Reuters and Xerox.

Competitors use the same three-step flow:

- Colossyan (70–170 avatars, 70+ languages, from 19 dollars per month)

- Elai.io (80+ avatars, 75+ languages)

- Hour One (virtual news anchors in 100+ languages)

Script for realism: write as you speak, break long sentences, and use the dashboard’s pronunciation tags for acronyms or brand names.
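A quick pre-flight check can catch sentences that are too long to sound natural when spoken. The sketch below is a rough heuristic, not a feature of any avatar platform: the 18-word limit is an arbitrary assumption, and the sentence splitter is deliberately naive (it will stumble on abbreviations such as "e.g.").

```python
import re

def long_sentences(script, max_words=18):
    """Return sentences whose word count exceeds max_words."""
    # Naive split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    return [s for s in sentences if len(s.split()) > max_words]

script = (
    "Welcome to the team. "
    "In this short video we will walk through the new expense policy, "
    "the approval chain, the reimbursement timeline, and the tools you "
    "need to submit a claim. "
    "Let's begin."
)

for sentence in long_sentences(script):
    print("Consider splitting:", sentence)
```

Anything it flags is a candidate for breaking into two shorter lines before you paste the script into the avatar tool.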

Why avatars? Scale. Clone a video into Spanish, French, and Thai in minutes, or let a camera-shy founder address the team without retakes. The output looks like a studio shoot without cameras, lights, or travel, giving small teams a professional presence around the clock.

Example 5 – turn long-form text into snackable video summaries

Long reads often die in forgotten browser tabs. Feed that same content to an AI summarizer and it returns a bite-size video fit for scrolling feeds.

Here’s the loop:

- Ingest. Paste a blog-post URL into Pictory or Lumen5.

  - Pictory’s Starter plan is 19 dollars per month for 200 video-minutes and exports clips up to 30 minutes.

  - Lumen5 caps each video at 10 minutes, plenty for social teasers.

- Draft. The model lifts headline sentences, matches each point with stock footage, overlays captions, and drops in background music. A 60-second draft typically renders in under 2 minutes on Pictory (internal benchmark shown during onboarding).

- Polish. Highlight must-keep lines, swap any mismatched B-roll, add your brand kit, then export square for LinkedIn or 9:16 for Reels.
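If your editor asks for explicit crop dimensions when switching formats, the math for a centered crop is short. The sketch below assumes a 1920×1080 landscape draft, which is an assumption rather than something either tool guarantees, and `center_crop` is a hypothetical helper, not part of Pictory or Lumen5.

```python
def center_crop(width, height, ratio_w, ratio_h):
    """Return (x, y, w, h) of the largest centered crop with the given aspect ratio."""
    if width * ratio_h > height * ratio_w:
        # Source is wider than the target: keep full height, trim the sides.
        h = height
        w = height * ratio_w // ratio_h
    else:
        # Source is taller than (or equal to) the target: keep full width.
        w = width
        h = width * ratio_h // ratio_w
    # Some encoders require even dimensions, so round down to even numbers.
    w -= w % 2
    h -= h % 2
    return ((width - w) // 2, (height - h) // 2, w, h)

print("square:", center_crop(1920, 1080, 1, 1))
print("9:16:  ", center_crop(1920, 1080, 9, 16))
```

A 9:16 crop keeps only about a third of a landscape frame's width, so check that captions and key subjects sit near the center before exporting for Reels.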

Newsrooms push this further with GliaCloud’s GliaStudio, which ingests raw sports stats and delivers highlight reels before the score is final, trimming edit time from hours to under 5 minutes, according to company case studies.

Marketers win the 1-to-many game: a single 1,200-word post spawns a 60-second teaser, a looping GIF, and an SEO-friendly embed back on the article page, turning 5 minutes of reader attention into 15 seconds of motion that points viewers to the deeper dive.
